This repository represents Ultralytics open-source research into future object detection methods, and incorporates lessons learned and best practices evolved over thousands of hours of training and evolution on anonymized client datasets. All code and models are under active development, and are subject to modification or deletion without notice. Use at your own risk.

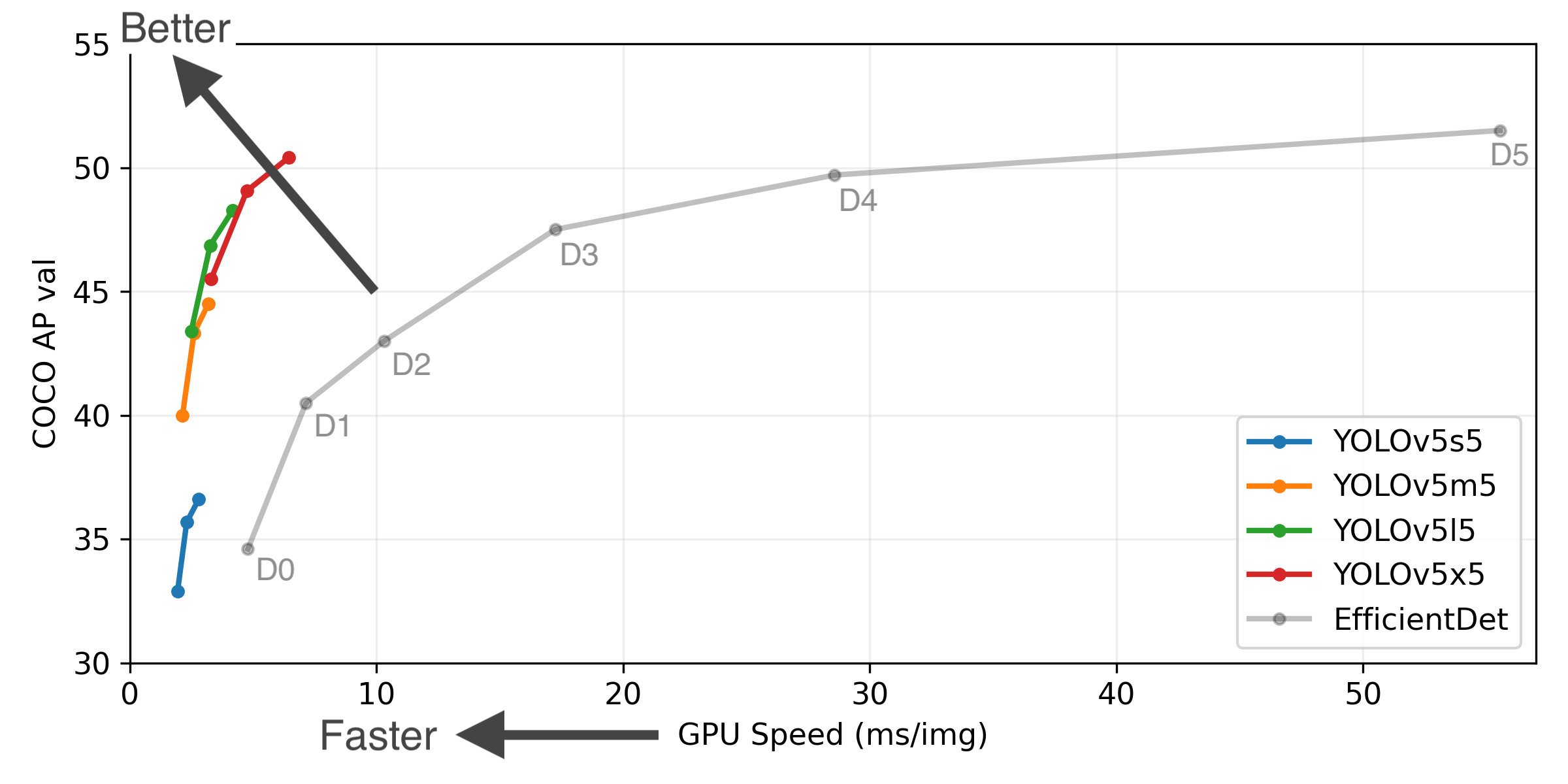

| Model | size (pixels) | mAP val 0.5:0.95 | mAP test 0.5:0.95 | mAP val 0.5 | Speed V100 (ms) | params (M) | FLOPS 640 (B) |
|---|---|---|---|---|---|---|---|
| YOLOv5s | 640 | 36.7 | 36.7 | 55.4 | 2.0 | 7.3 | 17.0 |
| YOLOv5m | 640 | 44.5 | 44.5 | 63.3 | 2.7 | 21.4 | 51.3 |
| YOLOv5l | 640 | 48.2 | 48.2 | 66.9 | 3.8 | 47.0 | 115.4 |
| YOLOv5x | 640 | 50.4 | 50.4 | 68.8 | 6.1 | 87.7 | 218.8 |
| | | | | | | | |
| YOLOv5s6 | 1280 | 43.3 | 43.3 | 61.9 | 4.3 | 12.7 | 17.4 |
| YOLOv5m6 | 1280 | 50.5 | 50.5 | 68.7 | 8.4 | 35.9 | 52.4 |
| YOLOv5l6 | 1280 | 53.4 | 53.4 | 71.1 | 12.3 | 77.2 | 117.7 |
| YOLOv5x6 | 1280 | 54.4 | 54.4 | 72.0 | 22.4 | 141.8 | 222.9 |
| | | | | | | | |
| YOLOv5x6 TTA | 1280 | 55.0 | 55.0 | 72.0 | 70.8 | - | - |

Table Notes

- AP test denotes COCO test-dev2017 server results; all other AP results denote val2017 accuracy.

- AP values are for single-model single-scale unless otherwise noted. Reproduce mAP by running `python test.py --data coco.yaml --img 640 --conf 0.001 --iou 0.65`

- Speed GPU is averaged over 5000 COCO val2017 images using a GCP n1-standard-16 V100 instance, and includes FP16 inference, postprocessing and NMS. Reproduce speed by running `python test.py --data coco.yaml --img 640 --conf 0.25 --iou 0.45`

- All checkpoints are trained to 300 epochs with default settings and hyperparameters (no autoaugmentation).

- Test Time Augmentation (TTA) includes reflection and scale augmentation. Reproduce TTA by running `python test.py --data coco.yaml --img 1536 --iou 0.7 --augment`
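The `--conf` and `--iou` values in the commands above are the confidence threshold and the NMS IoU threshold. As a rough illustration of how the two interact, here is a minimal pure-Python sketch of greedy NMS. It is not the repository's implementation (which works on batched tensors); the `(x1, y1, x2, y2, conf)` box layout, the function names and the sample boxes are assumptions made for this example.

```python
def iou(a, b):
    """Intersection over union of two boxes given as (x1, y1, x2, y2, ...)."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def greedy_nms(boxes, conf_thres=0.25, iou_thres=0.45):
    """Drop boxes below conf_thres, then keep the best box of each overlapping group."""
    candidates = sorted(
        (b for b in boxes if b[4] >= conf_thres),
        key=lambda b: b[4],
        reverse=True,
    )
    kept = []
    for box in candidates:
        # Keep a box only if it does not overlap too much with any box already kept.
        if all(iou(box, k) <= iou_thres for k in kept):
            kept.append(box)
    return kept


# Illustrative boxes (made-up values): (x1, y1, x2, y2, conf)
boxes = [
    (10, 10, 110, 110, 0.90),
    (12, 12, 112, 112, 0.80),    # near-duplicate of the first box
    (200, 200, 300, 300, 0.60),
    (0, 0, 50, 50, 0.10),        # low confidence
]
kept = greedy_nms(boxes)
```

A very low confidence threshold such as 0.001 keeps many more candidate boxes, which is why it suits mAP evaluation, while 0.25 reflects a typical deployment setting for speed measurement.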

Requirements

Python 3.8 or later with all requirements.txt dependencies installed, including torch>=1.7. To install run:

$ pip install -r requirements.txt
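To confirm the two version constraints named above before running anything else, a small check along these lines may help. This is a sketch only: `check_environment` and `parse_version` are hypothetical helpers, not part of the repository, and the check covers Python and torch only, not the rest of requirements.txt.

```python
import importlib.util
import sys

MIN_PYTHON = (3, 8)
MIN_TORCH = (1, 7)


def parse_version(text):
    """Turn a version string such as '1.7.0+cu101' into (1, 7)."""
    core = text.split("+")[0]      # drop a local build tag like '+cu101'
    parts = core.split(".")[:2]    # keep major and minor only
    return tuple(int("".join(ch for ch in p if ch.isdigit()) or 0) for p in parts)


def check_environment():
    """Return a list of problems found; an empty list means both checks passed."""
    problems = []
    if sys.version_info[:2] < MIN_PYTHON:
        problems.append("Python %d.%d found, 3.8 or later required" % sys.version_info[:2])
    if importlib.util.find_spec("torch") is None:
        problems.append("torch is not installed")
    else:
        import torch
        if parse_version(torch.__version__) < MIN_TORCH:
            problems.append("torch %s found, 1.7 or later required" % torch.__version__)
    return problems


if __name__ == "__main__":
    for problem in check_environment():
        print(problem)
```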

Tutorials

Environments

YOLOv5 may be run in any of the following up-to-date verified environments (with all dependencies including CUDA/CUDNN, Python and PyTorch preinstalled):

Inference

detect.py runs inference on a variety of sources, downloading models automatically from the latest YOLOv5 release and saving results to runs/detect.

    $ python detect.py --source 0  # webcam
                                file.jpg  # image
                                file.mp4  # video
                                path/  # directory
                                path/*.jpg  # glob
                                'https://youtu.be/NUsoVlDFqZg'  # YouTube video
                                'rtsp://example.com/media.mp4'  # RTSP, RTMP, HTTP stream
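The source types listed above can be told apart from the `--source` string alone. The sketch below shows one way to do that; it is an illustration, not the logic detect.py actually uses. `classify_source` is a hypothetical helper, the extension sets are assumed examples, and the fallback to "directory" is a simplification that does not touch the filesystem.

```python
from pathlib import Path

# Assumed example extensions; the real list of supported formats may differ.
IMAGE_EXTS = {".jpg", ".jpeg", ".png", ".bmp"}
VIDEO_EXTS = {".mp4", ".mov", ".avi", ".mkv"}
STREAM_PREFIXES = ("rtsp://", "rtmp://", "http://", "https://")


def classify_source(source):
    """Guess the kind of input a --source value refers to."""
    source = str(source)
    if source.isdigit():
        return "webcam"        # e.g. 0 for the default camera
    if source.lower().startswith(STREAM_PREFIXES):
        return "stream"        # RTSP, RTMP or HTTP(S) URL, including YouTube links
    if "*" in source:
        return "glob"          # wildcard pattern such as path/*.jpg
    suffix = Path(source).suffix.lower()
    if suffix in IMAGE_EXTS:
        return "image"
    if suffix in VIDEO_EXTS:
        return "video"
    return "directory"         # simplification: anything else is treated as a folder


for example in ("0", "file.jpg", "file.mp4", "path/", "path/*.jpg"):
    print(example, "->", classify_source(example))
```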

To run inference on example images in data/images:

    $ python detect.py --source data/images --weights yolov5s.pt --conf 0.25

    Namespace(agnostic_nms=False, augment=False, classes=None, conf_thres=0.25, device='', exist_ok=False, img_size=640, iou_thres=0.45, name='exp', project='runs/detect', save_conf=False, save_txt=False, source='data/images/', update=False, view_img=False, weights=['yolov5s.pt'])
    YOLOv5 v4.0-96-g83dc1b4 torch 1.7.0+cu101 CUDA:0 (Tesla V100-SXM2-16GB, 16160.5MB)

    Fusing layers...
    Model Summary: 224 layers, 7266973 parameters, 0 gradients, 17.0 GFLOPS
    image 1/2 /content/yolov5/data/images/bus.jpg: 640x480 4 persons, 1 bus, Done. (0.010s)
    image 2/2 /content/yolov5/data/images/zidane.jpg: 384x640 2 persons, 1 tie, Done. (0.011s)
    Results saved to runs/detect/exp2
    Done. (0.103s)

PyTorch Hub

To run batched inference with YOLOv5 and PyTorch Hub:

    import torch

    # Model
    model = torch.hub.load('ultralytics/yolov5', 'yolov5s')

    # Images
    dir = 'https://github.com/ultralytics/yolov5/raw/master/data/images/'
    imgs = [dir + f for f in ('zidane.jpg', 'bus.jpg')]  # batch of images

    # Inference
    results = model(imgs)
    results.print()  # or .show(), .save()
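Once inference has run, the detections usually need some post-processing. The sketch below counts detections per class above a confidence cutoff. It uses plain Python lists so it runs without torch. It assumes each detection is a row of `(x1, y1, x2, y2, confidence, class)`, which is how per-image results are understood to be laid out (for example after `results.xyxy[0].tolist()`); treat that layout, the `summarize` helper, the class-name mapping and the sample rows as assumptions for illustration.

```python
from collections import Counter


def summarize(rows, names, min_conf=0.25):
    """Count detections per class name, ignoring rows below min_conf."""
    counts = Counter(
        names[int(cls)]
        for *_, conf, cls in rows   # the last two values are confidence and class index
        if conf >= min_conf
    )
    return dict(counts)


# Assumed subset of class names and made-up detections for one image.
names = {0: "person", 27: "tie"}
rows = [
    [100, 50, 200, 300, 0.88, 0],
    [300, 60, 400, 310, 0.67, 0],
    [150, 200, 180, 260, 0.52, 27],
    [10, 10, 20, 20, 0.10, 0],      # below the cutoff, ignored
]
summary = summarize(rows, names)
print(summary)
```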

Training

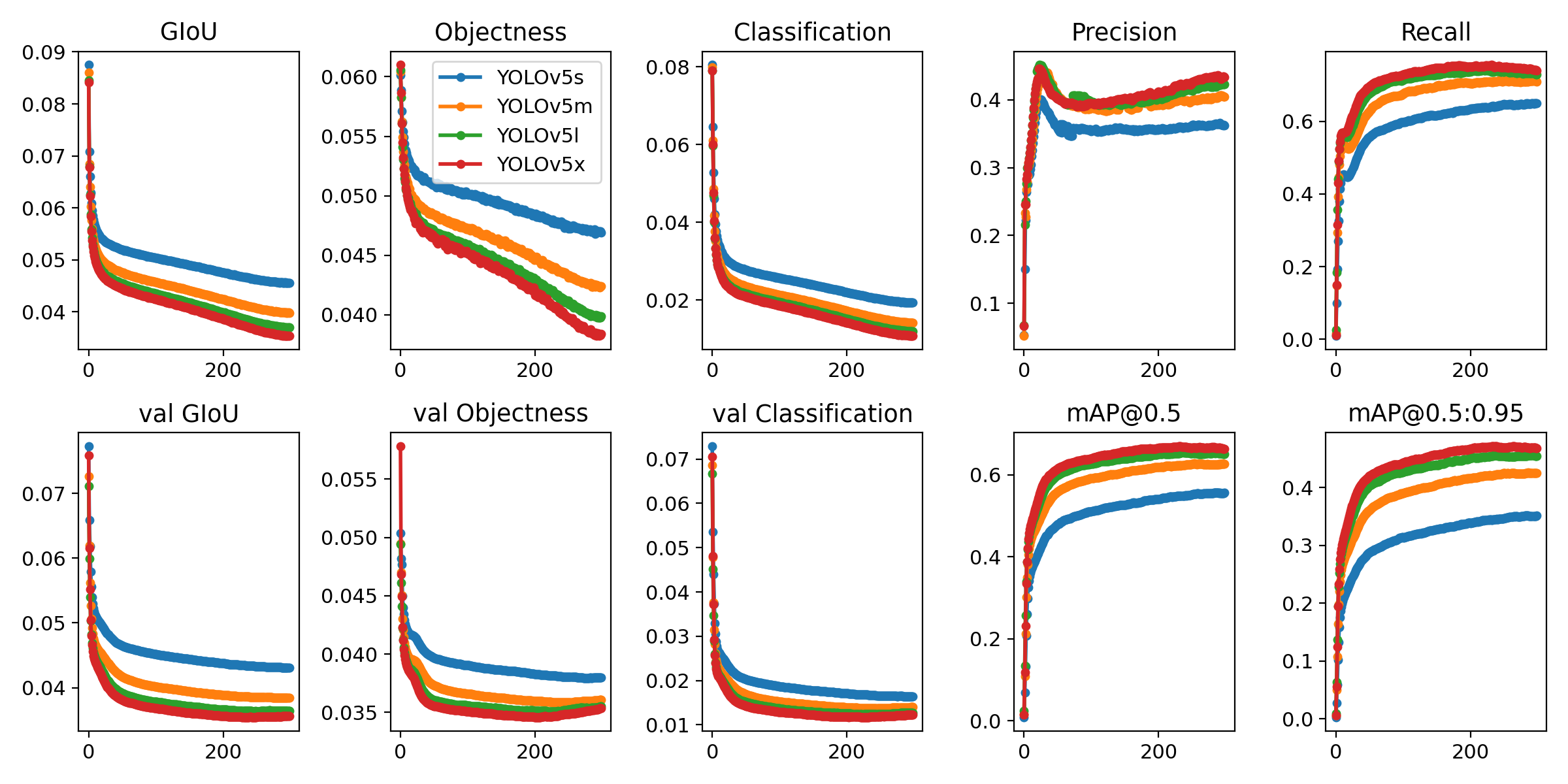

Run the commands below to reproduce results on the COCO dataset (the dataset auto-downloads on first use). Training times for YOLOv5s/m/l/x are 2/4/6/8 days on a single V100 (training on multiple GPUs is faster). Use the largest --batch-size your GPU allows (batch sizes shown are for 16 GB devices).

    $ python train.py --data coco.yaml --cfg yolov5s.yaml --weights '' --batch-size 64
                                             yolov5m                                40
                                             yolov5l                                24
                                             yolov5x                                16
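The four model and batch-size pairings above expand to four separate commands. A short script can generate them, which is handy when queuing runs. This is a sketch: `train_command` is a hypothetical helper, and the batch sizes are the 16 GB values quoted above, which should be adjusted for other devices.

```python
import shlex

# Batch sizes listed above for 16 GB devices.
BATCH_SIZES = {"yolov5s": 64, "yolov5m": 40, "yolov5l": 24, "yolov5x": 16}


def train_command(model, batch_size):
    """Build the train.py command line for one model as a shell-safe string."""
    args = [
        "python", "train.py",
        "--data", "coco.yaml",
        "--cfg", f"{model}.yaml",
        "--weights", "",             # empty string: train from scratch
        "--batch-size", str(batch_size),
    ]
    return " ".join(shlex.quote(a) for a in args)


commands = [train_command(m, b) for m, b in BATCH_SIZES.items()]
for command in commands:
    print(command)
```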

Citation

About Us

Ultralytics is a U.S.-based particle physics and AI startup with over 6 years of expertise supporting government, academic and business clients. We offer a wide range of vision AI services, spanning from simple expert advice up to delivery of fully customized, end-to-end production solutions, including:

- Cloud-based AI systems operating on hundreds of HD video streams in realtime.

- Edge AI integrated into custom iOS and Android apps for realtime 30 FPS video inference.

- Custom data training, hyperparameter evolution, and model exportation to any destination.

For business inquiries and professional support requests please visit us at https://www.ultralytics.com.

Contact

Issues should be raised directly in the repository. For business inquiries or professional support requests please visit https://www.ultralytics.com or email Glenn Jocher at glenn.jocher@ultralytics.com.